Node.js Basics

Click ★ if you like the project. Your contributions are heartily ♡ welcome.

Related Topics

- HTML Basics

- CSS Basics

- JavaScript Basics

- SQL-Server Basics

- MongoDB Basics

- Node.js APIs

- Node.js Commands

- Node.js Multiple Choice Questions

- Node.js Coding Practice

- Design Patterns

- Data Structures and Algorithms

Table of Contents

- Fundamentals & Core Concepts

- Core Modules & System Operations

- Web Development & APIs

- Reliability & Maintenance

- Advanced Optimization & Security

# 1. INTRODUCTION

Q. What is Node.js?

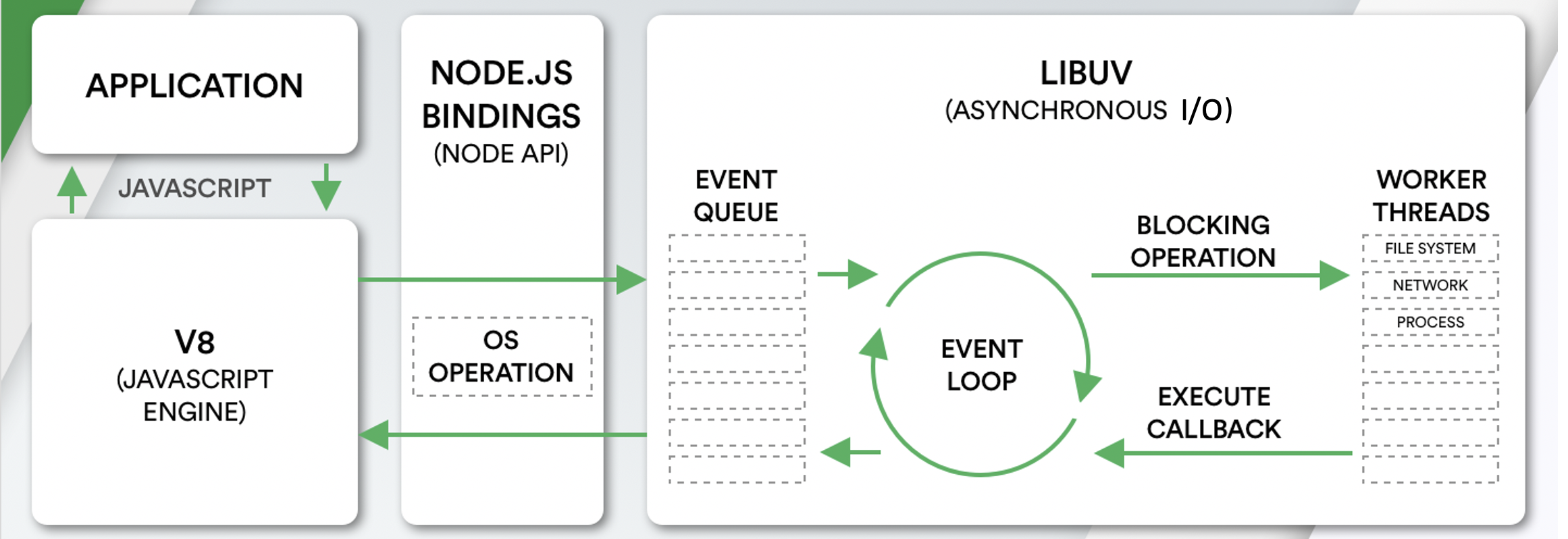

Node.js is an open-source server side runtime environment built on Chrome's V8 JavaScript engine. It provides an event driven, non-blocking (asynchronous) I/O and cross-platform runtime environment for building highly scalable server-side applications using JavaScript.

Q. What is Node.js Process Model?

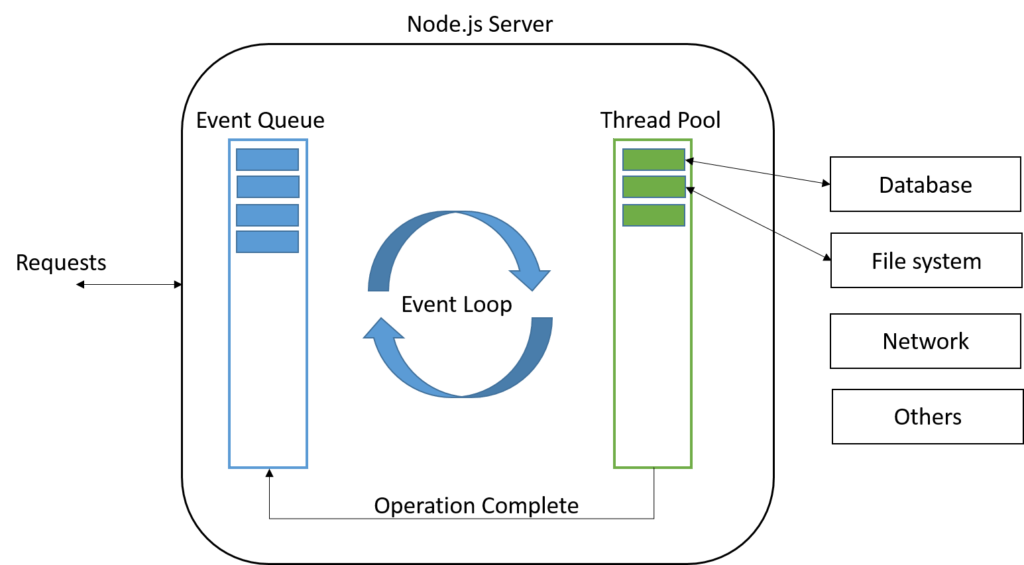

The Node.js Process Model is a single-threaded, event-driven architecture designed to handle many concurrent client requests efficiently. Unlike traditional web servers that create a new thread for every request, Node.js uses a Single-Threaded Event Loop to manage all non-blocking operations

Features:

- Runs in a single process with a single thread, requiring fewer resources than multi-threaded platforms

- All incoming requests are handled by that one thread

- Blocking I/O (file system, database, network) is handed off asynchronously — the thread doesn't wait for it to complete

- When async work finishes, a callback is queued and executed, and the response is sent back to the client

Flow:

- Client requests arrive and are placed in the Event Queue

- The Event Loop picks them up one by one

- If a request needs blocking I/O → it's assigned to a thread pool (libuv) in the background

- The main thread moves on to the next request immediately

- When the I/O completes, the callback fires and the response is returned

This is why Node.js is highly scalable for I/O-bound workloads — it never blocks waiting, unlike traditional thread-per-request servers.

Q. What are the key features of Node.js?

-

Asynchronous and Event driven – All APIs of Node.js are asynchronous. This feature means that if a Node receives a request for some Input/Output operation, it will execute that operation in the background and continue with the processing of other requests. Thus it will not wait for the response from the previous requests.

-

Fast in Code execution – Node.js uses the V8 JavaScript Runtime engine, the one which is used by Google Chrome. Node has a wrapper over the JavaScript engine which makes the runtime engine much faster and hence processing of requests within Node.js also become faster.

-

Single Threaded but Highly Scalable – Node.js uses a single thread model for event looping. The response from these events may or may not reach the server immediately. However, this does not block other operations. Thus making Node.js highly scalable. Traditional servers create limited threads to handle requests while Node.js creates a single thread that provides service to much larger numbers of such requests.

-

Node.js library uses JavaScript – This is another important aspect of Node.js from the developer's point of view. The majority of developers are already well-versed in JavaScript. Hence, development in Node.js becomes easier for a developer who knows JavaScript.

-

There is an Active and vibrant community for the Node.js framework – The active community always keeps the framework updated with the latest trends in the web development.

-

No Buffering – Node.js applications never buffer any data. They simply output the data in chunks.

Q. How does Node.js work?

Node.js is completely event-driven and processes everything on a single thread using a non-blocking I/O model.

Node.js works asynchronously by using the event loop and callback functions, to handle multiple requests coming in parallel. An Event Loop is a functionality which handles and processes all your external events and just converts them to a callback function. It invokes all the event handlers at a proper time. Thus, lots of work is done on the back-end, while processing a single request, so that the new incoming request doesn't have to wait if the processing is not complete.

While processing a request, Node.js attaches a callback function to it and moves it to the back-end. Now, whenever its response is ready, an event is called which triggers the associated callback function to send this response.

Core Components:

| Component | Role |

|---|---|

| V8 Engine | Executes JavaScript on the server (same engine as Chrome) |

| libuv | C library that handles async I/O, thread pool, event loop |

| Event Queue | Holds incoming requests/events in FIFO order |

| Event Loop | Continuously polls the queue and dispatches callbacks |

| Thread Pool | Background workers (libuv) for blocking operations (file, DNS, crypto) |

Request Lifecycle:

- A request arrives → placed in the Event Queue

- The Event Loop picks it up (call stack must be empty)

- No blocking I/O? → Process immediately, send response

- Blocking I/O needed? → Offload to a thread pool worker, register a callback, free the main thread

- Worker completes → fires an event → callback executes → response sent

Key Design Principles:

- Single-threaded JS execution — no thread management overhead

- Non-blocking by default — APIs are async with callbacks/promises

- Observer pattern — events trigger registered listeners

- Concurrency without threads — achieved via the event loop + callbacks, not parallel execution

What it’s great at: High-concurrency I/O-bound workloads (APIs, real-time apps, microservices)

What it’s not ideal for: CPU-intensive tasks (use worker_threads or child_process for those)

Q. What is difference between process and threads in Node.js?

In Node.js, the main difference between a process (using the child_process or cluster modules) and a thread (using the worker_threads module) lies in how they handle memory and isolation.

Process

A process is an independent program instance with its own memory space.

- Created via

child_process.fork()— spawns a new V8 instance - Isolated memory — no sharing between processes

- Communicates via IPC (message passing)

- If it crashes, only that process is affected

- Higher creation overhead

Thread

A thread is a unit of execution within a process.

- Created via

worker_threadsmodule - Shared memory within the same process (via

SharedArrayBuffer) - Communicates via

postMessageor shared memory - A crash can affect the entire process

- Lower creation overhead

Comparison Table

| Process | Thread | |

|---|---|---|

| Memory | Isolated | Shared within process |

| Creation cost | Higher (new V8 instance) | Lower |

| Communication | IPC (message passing) | SharedArrayBuffer / postMessage |

| Crash impact | Only that process | Can crash entire process |

| Use case | Separate Node.js apps, external commands | CPU-intensive JS tasks |

When to use which?

- Use

child_process.fork()— when you need full isolation (separate scripts, different environments) - Use

worker_threads— for CPU-heavy JS tasks (image processing, parsing, crypto) where you want low overhead and optional memory sharing

Node.js's main event loop always runs on a single thread — neither approach blocks it when used correctly.

# 2. NODE.JS ARCHITECTURE

Q. How does Node.js works?

Node.js is single-threaded and event-driven, using a non-blocking I/O model to handle many concurrent operations efficiently.

Core Components

| Component | Role |

|---|---|

| V8 Engine | Executes JavaScript (same engine as Chrome) |

| libuv | C library handling async I/O, thread pool, and event loop |

| Event Queue | Holds incoming requests/events in FIFO order |

| Event Loop | Continuously polls the queue and dispatches callbacks |

| Thread Pool | Background workers for blocking operations (file, DNS, crypto) |

Request Lifecycle

- Request arrives → placed in the Event Queue

- Event Loop picks it up (only when the call stack is empty)

- No blocking I/O? → Process immediately, send response

- Blocking I/O needed? → Offload to a thread pool worker, register a callback, free the main thread

- Worker completes → fires an event → callback executes → response sent

Client Requests

↓

Event Queue

↓

Event Loop (single thread)

├── Non-blocking? → Process & respond immediately

└── Blocking I/O? → Thread Pool (libuv)

↓

Callback fired → response sent

Key Design Principles

- Single-threaded JS execution — no thread management overhead

- Non-blocking by default — APIs are async with callbacks/promises

- Observer pattern — events trigger registered listeners

- Concurrency without threads — achieved via event loop + callbacks, not parallel execution

Q. What are the core modules of Node.js?

Node.js has a set of core modules that are part of the platform and come with the Node.js installation. These modules can be loaded into the program by using the require function.

The following table lists some of the important core modules in Node.js.

| Name | Description |

|---|---|

assert |

It is used by Node.js for testing itself. |

buffer |

Handle raw binary data outside the V8 heap |

child_process |

Spawn child processes (exec, spawn, fork) |

cluster |

This module is used by Node.js to take advantage of multi-core systems, so that it can handle more load. |

console |

It is used to write data to console. Node.js has a Console object which contains functions to write data to console. |

crypto |

Cryptographic functions — hashing, encryption, HMAC |

http/https |

Create HTTP/HTTPS servers and make requests |

url |

It includes methods for URL resolution and parsing. |

querystring |

It includes methods to deal with query string. |

path |

Utilities for working with file and directory paths |

fs |

File system — read, write, update, delete, rename files. |

stream |

Readable, Writable, Duplex, and Transform streams |

worker_threads |

Run CPU-intensive JS in background threads |

util |

It includes utility functions useful for programmers. |

zlib |

It is used to compress and decompress data. |

Example:

const http = require('http');

const fs = require('fs');

const path = require('path');

const crypto = require('crypto');

// HTTP server

http.createServer((req, res) => {

res.writeHead(200, { 'Content-Type': 'text/plain' });

res.end('Hello World');

}).listen(3000);

// File path

const filePath = path.join(__dirname, 'data', 'file.txt');

// Read file

fs.readFile(filePath, 'utf8', (err, data) => {

if (err) throw err;

console.log(data);

});

// Hash a string

const hash = crypto.createHash('sha256').update('secret').digest('hex');

console.log(hash);

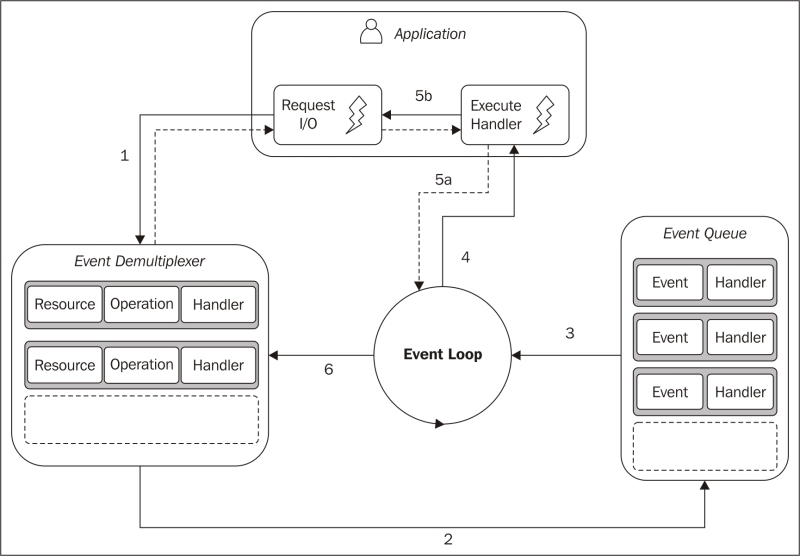

Q. What do you understand by Reactor Pattern in Node.js?

Reactor Pattern is used to avoid the blocking of the Input/Output operations. It provides us with a handler that is associated with I/O operations. When the I/O requests are to be generated, they get submitted to a de-multiplexer, which handles concurrency in avoiding the blocking of the I/O mode and collects the requests in form of an event and queues those events.

Types of Input/Output operations

1. Blocking I/O (Synchronous): Application will make a function call and pause its execution at a point until the data is received.

2. Non-Blocking I/O (Asynchronous): Application will make a function call, and, without waiting for the results it continues its execution. Node.js uses non-blocking I/O exclusively.

Reactor Pattern comprises of:

1. Resources: They are shared by multiple applications for I/O operations, generally slower in executions.

2. Synchronous Event De-multiplexer/Event Notifier: This uses Event Loop for blocking on all resources. When a set of I/O operations completes, the Event De-multiplexer pushes the new events into the Event Queue.

3. Event Loop and Event Queue: Event Queue queues up the new events that occurred along with its event-handler, pair.

4. Request Handler/Application: This is, generally, the application that provides the handler to be executed for registered events on resources.

Q. What are the global objects of Node.js?

Node.js Global Objects are the objects that are available in all modules. Global Objects are built-in objects that are part of the JavaScript and can be used directly in the application without importing any particular module.

Global Objects

| Object | Description |

|---|---|

global |

The global namespace object (equivalent to window in browsers) |

process |

Info and control over the current Node.js process |

console |

Write to stdout/stderr |

Buffer |

Handle raw binary data |

__dirname |

Absolute path of the current module’s directory |

__filename |

Absolute path of the current module’s file |

setTimeout / clearTimeout |

Schedule a one-time callback |

setInterval / clearInterval |

Schedule a repeating callback |

setImmediate / clearImmediate |

Execute after current event loop iteration |

queueMicrotask |

Queue a microtask |

URL / URLSearchParams |

Web-compatible URL API |

fetch |

HTTP client (available since Node.js v18) |

crypto |

Web Crypto API (available since Node.js v19) |

Examples:

// __dirname and __filename

console.log(__dirname); // D:\projects\myapp

console.log(__filename); // D:\projects\myapp\index.js

// process — runtime info

console.log(process.version); // v20.x.x

console.log(process.platform); // 'win32' / 'linux'

console.log(process.env.NODE_ENV); // 'development'

console.log(process.pid); // process ID

process.exit(0); // exit with code 0

// global — set a truly global variable (avoid in practice)

global.appName = 'MyApp';

console.log(appName); // 'MyApp' — accessible anywhere

// Buffer

const buf = Buffer.from('hello');

console.log(buf.toString('hex')); // 68656c6c6f

// Timers

const timer = setTimeout(() => console.log('done'), 1000);

clearTimeout(timer); // cancel it

__dirnameand__filenameare not available in ES Modules — useimport.meta.urlwithfileURLToPath()instead. Theglobalobject in Node.js v21+ is also aliased asglobalThis(the standard cross-environment global).

Q. What is chrome v8 engine?

V8 is an open-source, high-performance JavaScript and WebAssembly engine written in C++, developed by Google. It was originally designed for Google Chrome and Chromium-based browsers ( such as Brave ) in 2008, but it was later utilized to create Node.js for server-side coding.

V8 is the JavaScript engine i.e. it parses and executes JavaScript code. The DOM, and the other Web Platform APIs ( they all makeup runtime environment ) are provided by the browser.

V8 is known to be a JavaScript engine because it takes JavaScript code and executes it while browsing in Chrome. It provides a runtime environment for the execution of JavaScript code. The best part is that the JavaScript engine is completely independent of the browser in which it runs.

Just-In-Time (JIT) compilation:

V8 uses two compilers working together:

- Ignition — baseline interpreter that converts JS to bytecode quickly, collects profiling data

- TurboFan — optimizing compiler that re-compiles “hot” (frequently run) code into highly optimized machine code

V8 Key Optimizations

- Hidden classes — optimizes property access on objects

- Inline caching — caches results of repeated operations

- Garbage collection — generational GC (Scavenger + Mark-Compact) with minimal pause times

Q. Why is LIBUV needed in Node JS?

libuv is a cross-platform C library that gives Node.js its ability to perform non-blocking, asynchronous I/O — the core of everything Node.js does efficiently. It provides the following features:

- It allows the CPU and other resources to be used simultaneously while still performing I/O operations, thereby resulting in efficient use of resources and network.

- It facilitates an event-driven approach wherein I/O and other activities are performed using callback-based notifications.

- It provides mechanisms to handle file system, DNS, network, child processes, pipes, signal handling, polling and streaming

- It also includes a thread pool for offloading work for some things that can't be done asynchronously at the operating system level.

Example: Thread Pool

Some operations can't be made async at the OS level (e.g., some file system calls, DNS lookups, crypto). libuv offloads these to a pool of worker threads:

const fs = require('fs');

const crypto = require('crypto');

// These are offloaded to libuv\'s thread pool — main thread never blocks

fs.readFile('large-file.txt', (err, data) => {

console.log('File read complete');

});

crypto.pbkdf2('password', 'salt', 100000, 64, 'sha512', (err, key) => {

console.log('Hash complete');

});

console.log('Main thread continues immediately'); // prints first

Q. How V8 compiles JavaScript code?

Compilation is the process of converting human-readable code to machine code. There are two ways to compile the code

- Using an Interpreter: The interpreter scans the code line by line and converts it into byte code.

- Using a Compiler: The Compiler scans the entire document and compiles it into highly optimized byte code.

The V8 engine uses both a compiler and an interpreter and follows just-in-time (JIT) compilation to speed up the execution. JIT compiling works by compiling small portions of code that are just about to be executed. This prevents long compilation time and the code being compiles is only that which is highly likely to run.

Compilation Pipeline

JavaScript Source

↓

Parser

↓ generates

Abstract Syntax Tree (AST)

↓

Ignition (Interpreter)

↓ produces

Bytecode ←──────────────────────┐

↓ + profiling data │ de-optimize if

TurboFan (Optimizing Compiler) │ assumptions wrong

↓ │

Optimized Machine Code ─────────────┘

Stage 1 — Parsing

V8 reads JS source and builds an Abstract Syntax Tree (AST):

const x = a + b;

// AST (simplified):

// VariableDeclaration

// └── BinaryExpression (+)

// ├── Identifier (a)

// └── Identifier (b)

- Scanner tokenizes the raw text

- Parser builds the AST from tokens

- Pre-parser skips function bodies not yet called (lazy parsing — speeds up startup)

Stage 2 — Ignition (Interpreter)

Ignition converts the AST into bytecode — a compact, platform-independent instruction set:

// Bytecode for: return a + b

LdaNamedProperty a

Add b

Return

- Starts executing immediately — no wait for full compilation

- Collects type feedback (e.g., “a and b are always integers”)

- Much faster to generate than machine code

Stage 3 — TurboFan (Optimizing Compiler)

When a function becomes “hot” (called many times), TurboFan kicks in:

- Uses Ignition’s profiling data to make type assumptions

- Compiles to highly optimized native machine code

- Eliminates type checks, inlines functions, unboxes numbers

function add(a, b) { return a + b; }

add(1, 2); // interpreted by Ignition

add(3, 4); // still Ignition, profiling

add(5, 6); // ... "hot" → TurboFan optimizes assuming a,b are integers

add('x','y') // assumption broken → deoptimize back to Ignition

# 3. NODE.JS SETUP

Q. How to create a simple server in Node.js that returns Hello World?

Step 01: Create a project directory

mkdir myapp

cd myapp

Step 02: Initialize project and link it to npm

npm init

This creates a package.json file in your myapp folder. The file contains references for all npm packages you have downloaded to your project. The command will prompt you to enter a number of things.

You can enter your way through all of them EXCEPT this one:

entry point: (index.js)

Rename this to:

app.js

Step 03: Install Express in the myapp directory

npm install express --save

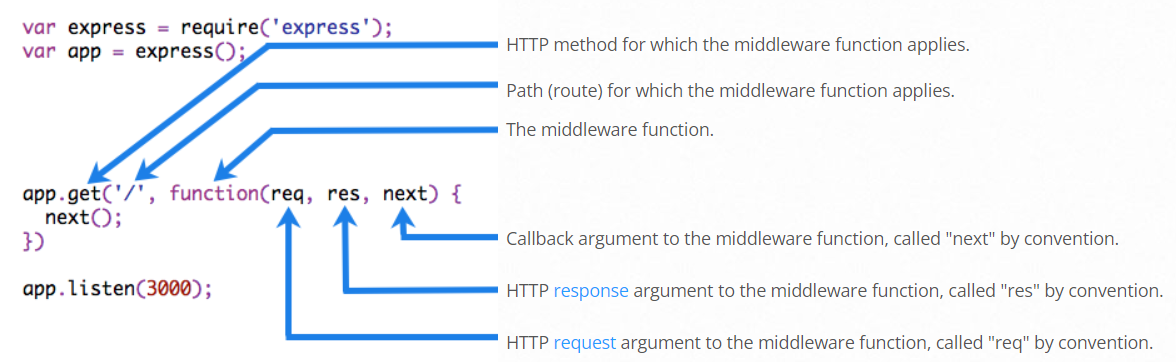

Step 04: app.js

/**

* Express.js

*/

const express = require('express');

const app = express();

app.get('/', function (req, res) {

res.send('Hello World!');

});

app.listen(3000, function () {

console.log('App listening on port 3000!');

});

Step 05: Run the app

node app.js

⚝ Try this example on CodeSandbox

Q. What is the difference between npm and npx?

npm (Node Package Manager) is used to install, share, and manage JavaScript packages. It is bundled with Node.js.

npx (Node Package Execute) is an npm package runner (available since npm v5.2) that lets you execute CLI packages without installing them globally.

| Feature | npm | npx |

|---|---|---|

| Purpose | Install and manage packages | Execute packages directly |

| Global install required | Yes, to use a CLI tool globally | No, runs temporarily |

| Version pinning | Via npm install |

Specify inline: npx pkg@version |

| Use case | npm install -g eslint |

npx eslint index.js |

Example:

// Install globally with npm, then use

npm install -g create-react-app

create-react-app my-app

// Use once without installing globally with npx (recommended)

npx create-react-app my-app

Q. What is the purpose of package.json in Node.js?

The package.json file is the manifest of a Node.js project. It holds metadata about the project and manages its dependencies, scripts, and configuration.

Example:

{

"name": "my-app",

"version": "1.0.0",

"description": "A Node.js application",

"main": "index.js",

"type": "module",

"scripts": {

"start": "node index.js",

"dev": "nodemon index.js",

"test": "jest"

},

"dependencies": {

"express": "^5.0.0"

},

"devDependencies": {

"jest": "^29.0.0",

"nodemon": "^3.0.0"

},

"engines": {

"node": ">=18.0.0"

},

"license": "MIT"

}

Important fields:

| Field | Description |

|---|---|

name |

The name of the package |

version |

Current version (follows semver: MAJOR.MINOR.PATCH) |

main |

Entry point for CommonJS modules |

type |

"module" for ES Modules, "commonjs" (default) for CJS |

scripts |

Shorthand commands runnable via npm run <script> |

dependencies |

Packages required in production |

devDependencies |

Packages only needed during development/testing |

engines |

Declares the Node.js version compatibility |

exports |

Controls public API surface of the package |

Q. What is package-lock.json and why is it important?

package-lock.json is an automatically generated file that locks the exact version of every installed package (including transitive dependencies) to guarantee reproducible installs across all environments and team members.

package.json |

package-lock.json |

|

|---|---|---|

| Written by | Developer | npm (auto-generated) |

| Contains | Version ranges (^1.2.3) |

Exact resolved versions |

| Purpose | Declare intent | Lock the dependency tree |

| Commit to git? | Yes | Yes |

Why it matters:

// package.json — "^1.2.3" allows 1.2.3 → 1.9.9

"dependencies": {

"express": "^4.18.0"

}

// package-lock.json — pins exactly what was installed

"node_modules/express": {

"version": "4.18.2",

"resolved": "https://registry.npmjs.org/express/-/express-4.18.2.tgz",

"integrity": "sha512-..."

}

Key npm commands:

npm install # installs using lock file if it exists (reproducible)

npm ci # strict clean install from lock file (best for CI/CD)

npm install pkg # adds/updates package and regenerates lock file

npm update # updates packages within semver ranges in package.json

npm ci vs npm install:

npm cideletesnode_modulesfirst, installs exact versions frompackage-lock.json, and fails if the lock file is out of sync — ideal for CI pipelines.npm installcreates or updatespackage-lock.jsonas needed.

Always commit

package-lock.jsonto version control so every developer and CI environment installs the identical dependency tree.

Q. What is semantic versioning in Node.js?

Semantic Versioning (SemVer) is a versioning scheme MAJOR.MINOR.PATCH used by npm packages to communicate the nature of changes in a release.

| Part | When to increment | Example |

|---|---|---|

| MAJOR | Breaking (backward-incompatible) changes | 1.0.0 → 2.0.0 |

| MINOR | New backward-compatible features | 1.0.0 → 1.1.0 |

| PATCH | Backward-compatible bug fixes | 1.0.0 → 1.0.1 |

Version range specifiers in package.json:

| Specifier | Meaning | Example resolves to |

|---|---|---|

1.2.3 |

Exact version only | 1.2.3 |

^1.2.3 |

Compatible with 1.x.x (fixes + features, no breaking) |

>=1.2.3 <2.0.0 |

~1.2.3 |

Approximately (patch updates only) | >=1.2.3 <1.3.0 |

* or "" |

Any version (not recommended in production) | latest |

>=1.0.0 |

Greater than or equal | 1.0.0, 2.5.0, … |

1.2.x |

Any patch in 1.2 |

1.2.0, 1.2.9, … |

Example:

{

"dependencies": {

"express": "^4.18.0", // accepts 4.18.x, 4.19.x, … but NOT 5.x

"lodash": "~4.17.0", // accepts 4.17.x only

"uuid": "9.0.0" // pinned to exactly 9.0.0

}

}

Pre-release and build metadata:

1.0.0-alpha.1 pre-release (lower than 1.0.0)

1.0.0-beta.2

1.0.0+build.123 build metadata (ignored in comparisons)

# Check installed vs available versions

npm outdated

# View resolved version

npm list express

# 4. NODE.JS ENVIRONMENT & CONFIGURATION

Q. What are environment variables in Node.js and how to use them?

Environment variables are key-value pairs available to a process at runtime, stored outside the application code. In Node.js they are accessed via process.env. They are the standard way to configure application behaviour (ports, credentials, feature flags) across different environments (development, test, production) without changing code.

Example:

// Set via the terminal before running the app:

// PORT=4000 NODE_ENV=production node app.js

const port = process.env.PORT || 3000;

const env = process.env.NODE_ENV || 'development';

console.log(`Running on port ${port} in ${env} mode`);

Common environment variables in Node.js projects:

| Variable | Purpose |

|---|---|

NODE_ENV |

Runtime environment (development, test, production) |

PORT |

HTTP server port |

DATABASE_URL |

Database connection string |

JWT_SECRET |

Secret key for signing JSON Web Tokens |

LOG_LEVEL |

Logging verbosity (debug, info, error) |

Q. How to use the dotenv package in Node.js?

The dotenv package loads environment variables from a .env file into process.env, keeping secrets out of source code and making local configuration easy.

Installation:

npm install dotenv

.env file (never commit this to version control):

PORT=3000

NODE_ENV=development

DATABASE_URL=postgres://user:password@localhost:5432/mydb

JWT_SECRET=supersecretkey

app.js:

// Load .env as early as possible — before any other require()

require('dotenv').config();

const express = require('express');

const app = express();

const port = process.env.PORT || 3000;

app.get('/', (req, res) => {

res.send(`Environment: ${process.env.NODE_ENV}`);

});

app.listen(port, () => {

console.log(`Server running on port ${port}`);

});

Best practices:

# .gitignore — never commit secrets

.env

.env.local

.env.production

# .env.example — commit this template with placeholder values

PORT=3000

NODE_ENV=development

DATABASE_URL=postgres://user:password@localhost:5432/mydb

JWT_SECRET=

In production, set environment variables through the hosting platform (AWS Parameter Store, Heroku Config Vars, Kubernetes Secrets) instead of deploying a

.envfile.

Q. What is the purpose of NODE_ENV in Node.js?

NODE_ENV is a convention used by Node.js frameworks and libraries to alter their behaviour based on the runtime environment. It is not set automatically — you must define it explicitly.

Common values:

| Value | Usage |

|---|---|

development |

Verbose errors, hot reload, debug logging |

test |

Isolated databases, mocked services |

production |

Minified output, cached templates, suppressed stack traces |

Example — toggling behaviour:

require('dotenv').config();

const express = require('express');

const app = express();

// Express automatically disables view cache and enables verbose errors

// when NODE_ENV !== 'production'

console.log(`NODE_ENV: ${process.env.NODE_ENV}`);

// Custom behaviour based on environment

if (process.env.NODE_ENV === 'production') {

// Use a real database connection

app.use(require('./middleware/errorHandler')); // hides stack traces

} else {

// Use an in-memory SQLite database for development

app.use((err, req, res, next) => {

console.error(err.stack); // show full stack in dev

res.status(500).json({ error: err.message, stack: err.stack });

});

}

Setting NODE_ENV:

# Linux / macOS

NODE_ENV=production node app.js

# Windows PowerShell

$env:NODE_ENV="production"; node app.js

# Cross-platform via npm script (cross-env package)

npm install --save-dev cross-env

# package.json

"scripts": {

"start": "cross-env NODE_ENV=production node app.js",

"dev": "cross-env NODE_ENV=development nodemon app.js"

}

Q. How to manage configuration for different environments in Node.js?

A common pattern is a dedicated config module that reads from environment variables and provides typed, validated configuration to the rest of the application.

Example — config module:

// config/index.js

require('dotenv').config();

const config = {

app: {

port: parseInt(process.env.PORT, 10) || 3000,

env: process.env.NODE_ENV || 'development',

},

db: {

url: process.env.DATABASE_URL,

},

jwt: {

secret: process.env.JWT_SECRET,

expiresIn: process.env.JWT_EXPIRES_IN || '1d',

},

};

// Validate required variables at startup

const required = ['DATABASE_URL', 'JWT_SECRET'];

for (const key of required) {

if (!process.env[key]) {

throw new Error(`Missing required environment variable: ${key}`);

}

}

module.exports = config;

// app.js

const config = require('./config');

console.log(`Starting on port ${config.app.port}`);

Failing fast on missing variables at startup prevents hard-to-diagnose runtime errors.

# 5. NODE.JS DATA TYPES

Q. What are the data types in Node.js?

Node.js uses the same data types as JavaScript, categorized into Primitive and Non-Primitive (Reference) types, but it also introduces specialized classes like Buffer to handle server-side needs.

1. Primitives:

- String: Represents textual data (e.g., “Hello”).

- Number: Represents both integers and floating-point values. Node.js numbers are 64-bit double-precision floats.

- BigInt: Used for integers larger than (2⁵³ - 1), which is the limit for the standard Number type.

- Boolean: Represents a logical entity with two values: true or false.

- Undefined: A variable that has been declared but not assigned a value.

- Null: Represents the intentional absence of any object value.

- Symbol: Unique and immutable values often used as private identifiers for object properties.

2. Non-Primitive (Reference) Data Types:

- Object: The fundamental building block for all complex structures, used to store key-value pairs (e.g., { name: “Node”, version: 20 }).

- Array: A specialized type of object used to store ordered lists.

- Function: Reusable blocks of code that are technically objects with callable capabilities.

- Others: Built-in objects like Date, RegExp, Map, and Set

3. Node.js Specific: The Buffer Class:

The Buffer class is essential for handling binary data in Node.js, such as reading from files or processing network packets

- Function: It represents a fixed-length sequence of bytes allocated outside the V8 heap.

- Usage: Unlike standard JavaScript arrays, buffers are designed specifically for performance in I/O operations

Q. Explain String data type in Node.js?

Strings in Node.js are sequences of unicode characters. Strings can be declared using single quotes, double quotes, or backticks (template literals).

Key characteristics:

- Immutable — you cannot change individual characters in place

- Zero-indexed —

str[0]gives the first character - UTF-16 encoded internally

Common String methods:

| Method | Description |

|---|---|

charAt(i) |

Returns the character at index i |

concat() |

Joins two or more strings |

indexOf() |

Returns the index of a substring (-1 if not found) |

includes() |

Returns true if substring exists |

split() |

Splits a string into an array |

slice(start, end) |

Extracts a portion of the string |

toUpperCase() / toLowerCase() |

Changes case |

trim() |

Removes whitespace from both ends |

replace() |

Replaces a substring or regex match |

Example:

const str1 = "Hello";

const str2 = 'World';

const str3 = `${str1}, ${str2}!`; // template literal

console.log(str3); // Hello, World!

console.log(str1.length); // 5

console.log(str1.toUpperCase()); // HELLO

console.log(str3.includes('World')); // true

console.log(str3.split(', ')); // ['Hello', 'World!']

console.log(str1.concat(' ', str2)); // Hello World

Q. Explain Number data type in Node.js?

The number data type in Node.js is 64 bits floating point number both positive and negative. The parseInt() and parseFloat() functions are used to convert to number, if it fails to convert into a number then it returns NaN.

The Number data type in Node.js follows the IEEE 754 double-precision 64-bit floating-point standard (same as JavaScript). It represents both integers and decimals using the same type.

Key characteristics:

- Range: $\pm5 \times 10^{-324}$ to $\pm1.8 \times 10^{308}$

- Safe integer range: $-(2^{53}-1)$ to $2^{53}-1$ (use

Number.MAX_SAFE_INTEGER) - Special values:

Infinity,-Infinity,NaN - Both integers and floats are the same type — no

intvsfloatdistinction

Common utility functions:

| Function / Property | Description |

|---|---|

parseInt(str, radix) |

Parses string to integer |

parseFloat(str) |

Parses string to float |

Number(value) |

Converts a value to number |

isNaN(value) |

Checks if value is NaN |

isFinite(value) |

Checks if value is finite |

Number.isInteger(value) |

Checks if value is an integer |

Number.MAX_SAFE_INTEGER |

9007199254740991 (2⁵³ - 1) |

.toFixed(n) |

Formats to n decimal places (returns string) |

Example:

// Basic numbers

const int = 42;

const float = 3.14;

console.log(typeof int); // 'number'

console.log(typeof float); // 'number'

// Parsing

console.log(parseInt("32")); // 32

console.log(parseInt("0xFF", 16)); // 255 (hex)

console.log(parseFloat("8.24")); // 8.24

console.log(parseInt("abc")); // NaN

// Special values

console.log(5 / 0); // Infinity

console.log(-5 / 0); // -Infinity

console.log(0 / 0); // NaN

console.log(isNaN(NaN)); // true

console.log(isFinite(10 / 5)); // true

console.log(isFinite(10 / 0)); // false

// Precision

console.log((1.1 + 2.2).toFixed(2)); // "3.30" — floating point quirk

console.log(Number.MAX_SAFE_INTEGER); // 9007199254740991

// Type conversion

console.log(Number("42")); // 42

console.log(Number("")); // 0

console.log(Number("abc")); // NaN

console.log(Number(true)); // 1

console.log(Number(false)); // 0

console.log(Number(null)); // 0

For integers larger than

Number.MAX_SAFE_INTEGER, useBigIntto avoid precision loss.

Q. Explain BigInt data type in Node.js?

BigInt is a primitive type for representing integers of arbitrary precision — integers larger than $2^{53} - 1$ (Number.MAX_SAFE_INTEGER) where the regular Number type loses accuracy.

Two ways to create a BigInt:

const a = 9007199254740991n; // append 'n' to an integer literal

const b = BigInt(9007199254740991); // BigInt() function

const c = BigInt('9007199254740991'); // from string

Why it's needed:

// Regular Number loses precision beyond MAX_SAFE_INTEGER

console.log(9007199254740991 + 1); // 9007199254740992 ✓

console.log(9007199254740991 + 2); // 9007199254740992 ✗ (wrong!)

// BigInt stays accurate

console.log(9007199254740991n + 2n); // 9007199254740993n ✓

Example:

const x = 10n;

const y = 3n;

console.log(x + y); // 13n

console.log(x - y); // 7n

console.log(x * y); // 30n

console.log(x / y); // 3n (integer division — no decimals)

console.log(x % y); // 1n

console.log(x ** y); // 1000n

typeof 42n; // 'bigint'

Important restrictions:

// Cannot mix BigInt and Number directly

10n + 5; // TypeError

// Explicit conversion required

10n + BigInt(5); // 15n

Number(10n) + 5; // 15

// No decimal BigInt

1.5n; // SyntaxError

// Comparison with Number works (loose equality only)

42n == 42; // true (loose)

42n === 42; // false (strict — different types)

// JSON.stringify does NOT support BigInt natively

JSON.stringify(42n); // TypeError — use .toString() first

Common use cases in Node.js:

- Cryptographic operations with large integers

- Database IDs (e.g., PostgreSQL

bigintcolumns) - Financial calculations requiring exact integer arithmetic

- Timestamps in nanoseconds (

process.hrtime.bigint())

// Node.js built-in that returns BigInt

const start = process.hrtime.bigint();

// ... some work ...

const elapsed = process.hrtime.bigint() - start;

console.log(`Elapsed: ${elapsed} ns`);

Q. Explain Boolean data type in Node.js?

The Boolean data type has exactly two possible values: true or false. It is used for logical conditions, control flow, and flag variables. Node.js (like JavaScript) follows specific truthy/falsy rules when coercing other types to Boolean.

Falsy values — coerce to false:

| Value | Type |

|---|---|

false |

Boolean |

0, -0, 0n |

Number / BigInt |

"", '', ` `` ` |

Empty string |

null |

Null |

undefined |

Undefined |

NaN |

Number |

Everything else is truthy (including [], {}, "0", "false").

Common Boolean operators:

| Operator | Name | Example | Result |

|---|---|---|---|

&& |

Logical AND | true && false |

false |

\|\| |

Logical OR | true \|\| false |

true |

! |

Logical NOT | !true |

false |

?? |

Nullish coalescing | null ?? 'default' |

'default' |

Example:

/**

* Boolean Data Type

*/

// Literal boolean values

const isValid = true;

const isExpired = false;

console.log(typeof isValid); // 'boolean'

// Boolean() conversion

console.log(Boolean(1)); // true

console.log(Boolean(0)); // false

console.log(Boolean("hello")); // true

console.log(Boolean("")); // false

console.log(Boolean(null)); // false

console.log(Boolean(undefined)); // false

console.log(Boolean(NaN)); // false

console.log(Boolean([])); // true (empty array is truthy!)

console.log(Boolean({})); // true (empty object is truthy!)

// Logical operators

console.log(true && true); // true

console.log(true && false); // false

console.log(true || false); // true

console.log(false || false); // false

console.log(!true); // false

console.log(!false); // true

// Short-circuit evaluation

const user = null;

const name = user && user.name; // null (short-circuits, avoids error)

const role = user || 'guest'; // 'guest'

// Double negation — convert any value to boolean

console.log(!!1); // true

console.log(!!""); // false

console.log(!!null); // false

Avoid

new Boolean()— it creates a Boolean object (always truthy) rather than a primitive, which leads to confusing bugs. Always useBoolean()(withoutnew) or!!for type coercion.

Q. Explain Undefined and Null data type in Node.js?

undefined and null are two distinct primitive types in Node.js that both represent the absence of a value, but with different semantics.

undefined

- A variable that has been declared but not yet assigned a value is automatically

undefined. - It is also the return value of a function that has no

returnstatement. - Accessing a non-existent object property returns

undefined. typeof undefined === 'undefined'

null

- Represents the intentional absence of any object value — you explicitly set it.

- Often used to reset or clear a variable.

typeof null === 'object'— this is a long-standing JavaScript quirk.- Use strict equality (

===) to distinguishnullfromundefined.

Key differences:

undefined |

null |

|

|---|---|---|

| Set by | JavaScript engine (automatically) | Developer (intentionally) |

| Meaning | Variable declared but not assigned | Intentional absence of value |

typeof |

'undefined' |

'object' (quirk) |

Loose equality (==) |

null == undefined → true |

null == undefined → true |

Strict equality (===) |

null === undefined → false |

null === undefined → false |

| JSON serialization | Omitted from JSON output | Serialized as null |

Example:

/**

* Undefined and Null Data Types

*/

// --- undefined ---

let x;

console.log(x); // undefined

console.log(typeof x); // 'undefined'

function noReturn() {}

console.log(noReturn()); // undefined

const obj = { name: 'Node' };

console.log(obj.version); // undefined (property doesn't exist)

// Function parameter not passed

function greet(name) {

console.log(name);

}

greet(); // undefined

// --- null ---

let y = null;

console.log(y); // null

console.log(typeof y); // 'object' (historical JS quirk)

// Intentionally clearing a reference

let user = { name: 'Alice' };

user = null; // release the reference

// --- Equality comparisons ---

console.log(null == undefined); // true (loose — both "empty")

console.log(null === undefined); // false (strict — different types)

// --- Nullish coalescing (??) — guards against both null and undefined ---

const config = null;

const timeout = config ?? 3000;

console.log(timeout); // 3000

// --- Optional chaining (?.) — safe property access ---

const profile = null;

console.log(profile?.address?.city); // undefined (no error thrown)

// --- Checking for null or undefined ---

function isNullOrUndefined(val) {

return val == null; // true for both null and undefined

}

console.log(isNullOrUndefined(null)); // true

console.log(isNullOrUndefined(undefined)); // true

console.log(isNullOrUndefined(0)); // false

console.log(isNullOrUndefined('')); // false

Use

val == nullas a concise guard for bothnullandundefined. Useval === nullorval === undefinedwhen you need to distinguish between them.

Q. Explain Symbol data type in Node.js?

Symbol is a primitive data type introduced in ES6. Every Symbol() call returns a guaranteed unique value — no two symbols are ever equal, even if they share the same description. Symbols are commonly used as unique, non-colliding property keys on objects.

Key characteristics:

- Always unique —

Symbol('x') !== Symbol('x') - Immutable and primitive —

typeof Symbol() === 'symbol' - Not auto-converted to strings — explicit

.toString()or.descriptionneeded - Not enumerable by default — hidden from

for...in,Object.keys(), andJSON.stringify() - Accessible via

Object.getOwnPropertySymbols()

Creating Symbols:

const sym1 = Symbol(); // no description

const sym2 = Symbol('id'); // with description (for debugging)

const sym3 = Symbol('id'); // different symbol — NOT equal to sym2

console.log(sym2 === sym3); // false — always unique

console.log(typeof sym2); // 'symbol'

console.log(sym2.description); // 'id'

console.log(sym2.toString()); // 'Symbol(id)'

Use case 1 — unique object property keys (avoid naming collisions):

/**

* Symbol as object property key

*/

const ID = Symbol('id');

const NAME = Symbol('name');

const user = {

[ID]: 101,

[NAME]: 'Alice',

role: 'admin',

};

console.log(user[ID]); // 101

console.log(user[NAME]); // 'Alice'

// Symbols are invisible to standard enumeration

console.log(Object.keys(user)); // ['role']

console.log(JSON.stringify(user)); // '{"role":"admin"}'

// Access symbol keys explicitly

console.log(Object.getOwnPropertySymbols(user)); // [ Symbol(id), Symbol(name) ]

Use case 2 — constants / enum-like values:

// Symbols guarantee uniqueness, unlike string constants

const Direction = {

UP: Symbol('UP'),

DOWN: Symbol('DOWN'),

LEFT: Symbol('LEFT'),

RIGHT: Symbol('RIGHT'),

};

function move(dir) {

if (dir === Direction.UP) console.log('Moving up');

}

move(Direction.UP); // Moving up

move(Symbol('UP')); // no output — different symbol, even with same description

Use case 3 — Symbol.for() and the global symbol registry:

// Symbol.for() creates/retrieves a shared symbol from a global registry

const s1 = Symbol.for('shared');

const s2 = Symbol.for('shared');

console.log(s1 === s2); // true — same registry entry

console.log(Symbol.keyFor(s1)); // 'shared'

// Regular Symbol() is NOT in the registry

const local = Symbol('shared');

console.log(local === s1); // false

Use case 4 — Well-known Symbols (customize built-in behavior):

// Symbol.iterator — make an object iterable

const range = {

from: 1,

to: 5,

[Symbol.iterator]() {

let current = this.from;

const last = this.to;

return {

next() {

return current <= last

? { value: current++, done: false }

: { value: undefined, done: true };

},

};

},

};

console.log([...range]); // [1, 2, 3, 4, 5]

Well-known Symbols overview:

| Symbol | Purpose |

|---|---|

Symbol.iterator |

Defines the default iterator (for...of, spread) |

Symbol.toPrimitive |

Customizes type conversion |

Symbol.hasInstance |

Customizes instanceof behavior |

Symbol.toStringTag |

Customizes Object.prototype.toString output |

Symbol.asyncIterator |

Defines the async iterator (for await...of) |

Q. Explain function in Node.js?

Functions are first-class citizens in Node.js — they can be assigned to variables, passed as arguments, returned from other functions, and stored in data structures. Node.js supports several function forms, each suited to different use cases.

Function types:

| Type | Syntax | this binding |

arguments object |

Hoisted? |

|---|---|---|---|---|

| Function declaration | function foo() {} |

Dynamic | Yes | Yes |

| Function expression | const foo = function() {} |

Dynamic | Yes | No |

| Arrow function | const foo = () => {} |

Lexical (inherited) | No | No |

| Async function | async function foo() {} |

Dynamic | Yes | Yes |

| Generator function | function* foo() {} |

Dynamic | Yes | Yes |

1. Function declaration (hoisted):

// Can be called before the declaration

greet('World'); // Hello, World!

function greet(name) {

console.log(`Hello, ${name}!`);

}

2. Function expression:

const square = function(n) {

return n * n;

};

console.log(square(5)); // 25

3. Arrow function (no own this):

const add = (a, b) => a + b;

console.log(add(3, 4)); // 7

// Useful in callbacks — inherits `this` from enclosing scope

const timer = {

seconds: 0,

start() {

setInterval(() => {

this.seconds++; // `this` refers to `timer`, not the interval

}, 1000);

},

};

4. Default parameters:

function createUser(name, role = 'user', active = true) {

return { name, role, active };

}

console.log(createUser('Alice')); // { name: 'Alice', role: 'user', active: true }

console.log(createUser('Bob', 'admin')); // { name: 'Bob', role: 'admin', active: true }

5. Rest parameters and spread:

function sum(...numbers) {

return numbers.reduce((acc, n) => acc + n, 0);

}

console.log(sum(1, 2, 3, 4)); // 10

const nums = [1, 2, 3];

console.log(sum(...nums)); // 6

6. Functions as first-class citizens (higher-order functions):

// Function passed as argument (callback pattern)

function applyOperation(a, b, operation) {

return operation(a, b);

}

const multiply = (x, y) => x * y;

console.log(applyOperation(4, 5, multiply)); // 20

// Function returning a function (closure)

function multiplier(factor) {

return (number) => number * factor;

}

const double = multiplier(2);

const triple = multiplier(3);

console.log(double(7)); // 14

console.log(triple(7)); // 21

7. Async function (returns a Promise):

async function fetchData(url) {

try {

const response = await fetch(url);

const data = await response.json();

return data;

} catch (err) {

console.error('Fetch failed:', err.message);

}

}

8. IIFE (Immediately Invoked Function Expression):

// Creates a private scope — useful for module-like isolation

const result = (function() {

const secret = 42;

return { getValue: () => secret };

})();

console.log(result.getValue()); // 42

9. Generator function:

function* idGenerator() {

let id = 1;

while (true) {

yield id++;

}

}

const gen = idGenerator();

console.log(gen.next().value); // 1

console.log(gen.next().value); // 2

console.log(gen.next().value); // 3

Q. How to work with Buffers in Node.js?

A Buffer is a fixed-size chunk of memory allocated outside the V8 heap, designed to handle raw binary data — file I/O, network packets, cryptographic operations, and image processing. Unlike JavaScript strings (UTF-16), Buffers store raw bytes.

Note: The old

new Buffer()constructor was deprecated and removed in Node.js v22. Always useBuffer.alloc(),Buffer.allocUnsafe(), orBuffer.from().

Buffer creation methods:

| Method | Description |

|---|---|

Buffer.alloc(size) |

Zero-filled buffer of size bytes (safe) |

Buffer.allocUnsafe(size) |

Uninitialized buffer — faster but may contain old data |

Buffer.from(string, encoding) |

Buffer from a string |

Buffer.from(array) |

Buffer from an array of byte values |

Buffer.from(buffer) |

Copy of an existing Buffer |

Buffer.concat(list) |

Concatenate multiple Buffers into one |

1. Allocating Buffers:

// Zero-filled (safe — always use this for sensitive data)

const safe = Buffer.alloc(8);

console.log(safe); // <Buffer 00 00 00 00 00 00 00 00>

// Uninitialized (faster, but may contain garbage — never expose without filling)

const unsafe = Buffer.allocUnsafe(8);

unsafe.fill(0); // manually zero it out before use

2. Creating from strings:

const buf = Buffer.from('Hello, Node.js', 'utf8');

console.log(buf.toString()); // Hello, Node.js (default: utf8)

console.log(buf.toString('hex')); // 48656c6c6f2c204e6f64652e6a73

console.log(buf.toString('base64')); // SGVsbG8sIE5vZGUuanM=

console.log(buf.length); // 14 (bytes, not characters)

Supported encodings: 'utf8', 'ascii', 'base64', 'base64url', 'hex', 'latin1', 'binary', 'ucs2'

3. Creating from byte arrays:

const buf = Buffer.from([72, 101, 108, 108, 111]); // ASCII codes

console.log(buf.toString()); // Hello

4. Reading and writing bytes:

const buf = Buffer.alloc(4);

// Write individual bytes

buf[0] = 0x4e; // 'N'

buf[1] = 0x6f; // 'o'

buf[2] = 0x64; // 'd'

buf[3] = 0x65; // 'e'

console.log(buf.toString()); // Node

// Read/write multi-byte integers (useful for binary protocols)

const numBuf = Buffer.alloc(4);

numBuf.writeUInt32BE(305419896, 0); // big-endian

console.log(numBuf.readUInt32BE(0)); // 305419896

5. Slicing and copying:

const buf = Buffer.from('Node.js is great');

// subarray() — returns a view (shares memory, does NOT copy)

const view = buf.subarray(0, 7);

console.log(view.toString()); // Node.js

// copy() — copies bytes into another buffer

const dest = Buffer.alloc(7);

buf.copy(dest, 0, 0, 7);

console.log(dest.toString()); // Node.js

// Mutating the view also mutates the original

view[0] = 0x58; // 'X'

console.log(buf.toString()); // Xode.js is great ← shared memory!

6. Concatenating Buffers:

const part1 = Buffer.from('Hello, ');

const part2 = Buffer.from('Node.js!');

const combined = Buffer.concat([part1, part2]);

console.log(combined.toString()); // Hello, Node.js!

7. Comparing Buffers:

const a = Buffer.from('ABC');

const b = Buffer.from('ABC');

const c = Buffer.from('XYZ');

console.log(a.equals(b)); // true

console.log(a.equals(c)); // false

console.log(Buffer.compare(a, c)); // -1 (a < c lexicographically)

8. Real-world use — reading a file as a Buffer:

const fs = require('fs');

fs.readFile('image.png', (err, data) => {

// data is a Buffer containing raw binary file contents

console.log(`File size: ${data.length} bytes`);

console.log(`First 4 bytes (PNG magic number): ${data.subarray(0, 4).toString('hex')}`);

// PNG files always start with: 89504e47

});

Buffer vs string performance:

- Use Buffer for binary data (files, sockets, crypto)

- Use string for text data

- Converting between them has a cost — minimize unnecessary conversions in hot paths

Q. What is the difference between CommonJS and ES Modules in Node.js?

Node.js supports two module systems: CommonJS (CJS) — the original system — and ES Modules (ESM) — the modern JavaScript standard

Feature comparison:

| Feature | CommonJS (CJS) | ES Modules (ESM) |

|---|---|---|

| Syntax | require() / module.exports |

import / export |

| Loading | Synchronous (blocking) | Asynchronous (deferred) |

| File extension | .js or .cjs |

.mjs or .js with "type":"module" |

__dirname / __filename |

Available | Not available — use import.meta |

Top-level await |

Not supported | Supported |

| Tree shaking | Not supported | Supported by bundlers |

| Named exports | Via destructuring require |

Native export keyword |

| Default in Node.js | Yes | Opt-in ("type":"module" in package.json) |

require() inside ESM |

Not available by default | Via createRequire() |

| Dynamic import | require() (sync) |

import() (async, returns Promise) |

1. CommonJS — exporting and importing:

// math.js (CJS)

function add(a, b) { return a + b; }

function subtract(a, b) { return a - b; }

// Named exports via object

module.exports = { add, subtract };

// OR default export pattern

module.exports = add;

// app.js (CJS)

const { add, subtract } = require('./math');

console.log(add(2, 3)); // 5

console.log(subtract(5, 2)); // 3

2. ES Modules — named and default exports:

// math.mjs (ESM) — named exports

export function add(a, b) { return a + b; }

export function subtract(a, b) { return a - b; }

export const PI = 3.14159;

// Default export

export default function multiply(a, b) { return a * b; }

// app.mjs (ESM)

import multiply, { add, subtract, PI } from './math.mjs';

console.log(add(2, 3)); // 5

console.log(subtract(5, 2)); // 3

console.log(multiply(4, 5)); // 20

console.log(PI); // 3.14159

3. Enabling ESM in a project:

// package.json — treat all .js files as ESM

{

"type": "module"

}

Or use .mjs extension for individual files without changing package.json.

4. __dirname and __filename equivalent in ESM:

// ESM does not have __dirname — use import.meta.url instead

import { fileURLToPath } from 'url';

import { dirname, join } from 'path';

const __filename = fileURLToPath(import.meta.url);

const __dirname = dirname(__filename);

console.log(__dirname); // current directory

console.log(join(__dirname, 'data.json')); // absolute path to a file

5. Top-level await — ESM only:

// app.mjs — await at the top level (no async wrapper needed)

const data = await fetch('https://api.example.com/config').then(r => r.json());

console.log(data);

// app.js (CJS) — must wrap in async function

(async () => {

const data = await fetch('https://api.example.com/config').then(r => r.json());

console.log(data);

})();

6. Using require() inside ESM (interop):

// ESM file that needs to require() a CJS module

import { createRequire } from 'module';

const require = createRequire(import.meta.url);

const lodash = require('lodash'); // works in ESM via createRequire

7. Dynamic import — works in both CJS and ESM:

// Lazy-load a module only when needed (returns a Promise)

async function loadModule() {

const { add } = await import('./math.mjs');

console.log(add(1, 2)); // 3

}

loadModule();

Recommendation: Use ESM (

import/export) for new projects — it supports top-levelawait, tree shaking, and is the official JavaScript standard. Use CommonJS only when working with legacy codebases or packages that don’t yet support ESM.

Q. How does module caching work in Node.js?

When require() loads a module for the first time, Node.js executes the module file and caches the exported object in require.cache. Every subsequent require() call for the same file path returns the cached exports directly, without re-executing the file.

This means:

- Module-level code (e.g., a database connection) runs only once.

- Mutating an exported object in one module is visible to all other modules that required it.

Example — cache in action:

// counter.js

let count = 0;

module.exports = {

increment() { count++; },

get() { return count; },

};

// app.js

const a = require('./counter');

const b = require('./counter'); // same cached reference

a.increment();

a.increment();

console.log(a === b); // true — exact same object

console.log(b.get()); // 2 — b sees mutations made via a

Inspecting the cache:

// Print all cached module paths

console.log(Object.keys(require.cache));

// Delete a module from cache (forces re-execution on next require)

delete require.cache[require.resolve('./counter')];

When is caching NOT used?

require()with different resolved paths (e.g., case sensitivity on Linux, symlinks) creates separate cache entries.- ES Modules (

import) also cache, but their cache is separate from the CommonJS cache. - Explicitly deleting from

require.cachebypasses it (useful in tests to get a fresh module).

Practical use — singleton pattern:

// db.js — connection is created only once, shared across the app

const { Pool } = require('pg');

const pool = new Pool({ connectionString: process.env.DATABASE_URL });

module.exports = pool; // cached: every require('./db') returns the same Pool

# 6. NODE.JS EVENTS

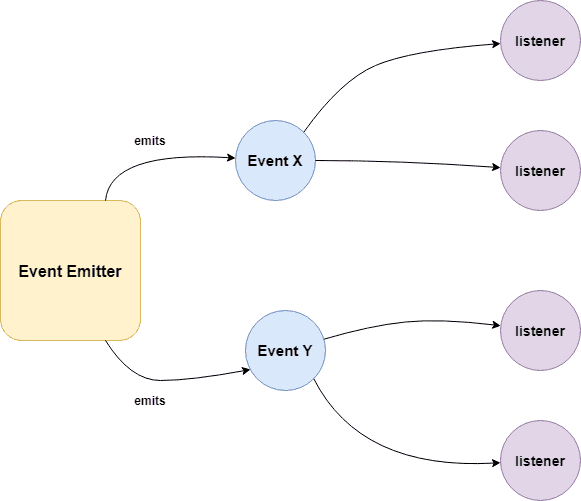

Q. What is EventEmitter in Node.js?

EventEmitter is a class from Node.js's built-in events module that implements the Observer (Pub/Sub) pattern — objects can emit named events, and listeners registered for those events are called when they fire.

EventEmitter is at the core of Node asynchronous event-driven architecture. Many of Node's built-in modules inherit from EventEmitter including prominent frameworks like Express.js. An emitter object basically has two main features:

- Emitting name events.

- Registering and unregistering listener functions.

Example:

/**

* Callback Events with Parameters

*/

const events = require('events');

const eventEmitter = new events.EventEmitter();

function listener(code, msg) {

console.log(`status ${code} and ${msg}`);

}

eventEmitter.on('status', listener); // Register listener

eventEmitter.emit('status', 200, 'ok');

// Output

status 200 and ok

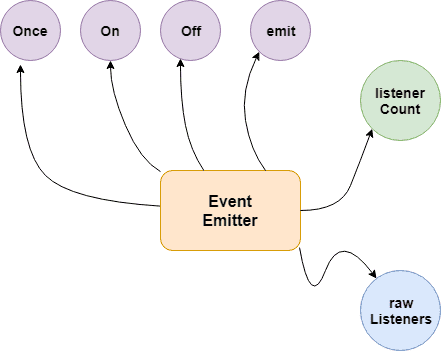

Key Methods

| Method | Description |

|---|---|

.on(event, listener) |

Register a listener (fires every time) |

.once(event, listener) |

Register a listener that fires only once |

.emit(event, ...args) |

Trigger all listeners for an event |

.off(event, listener) |

Remove a specific listener |

.removeAllListeners(event) |

Remove all listeners for an event |

.listeners(event) |

Returns array of listeners for an event |

.listenerCount(event) |

Returns number of listeners |

.setMaxListeners(n) |

Change the limit (default: 10) |

Node.js prints a warning when more than 10 listeners are registered for a single event — use

emitter.setMaxListeners(0)to disable the limit, or increase it as needed.

Q. How does the EventEmitter works in Node.js?

EventEmitter maintains an internal registry (a plain object) mapping event names to arrays of listener functions. When you emit an event, it looks up that array and calls each function synchronously in registration order.

- Event Emitter emits the data in an event called message

- A Listened is registered on the event message

- when the message event emits some data, the listener will get the data

Building Blocks:

- .emit() - this method in event emitter is to emit an event in module

- .on() - this method is to listen to data on a registered event in node.js

- .once() - it listen to data on a registered event only once.

- .addListener() - it checks if the listener is registered for an event.

- .removeListener() - it removes the listener for an event.

Example 01:

/**

* Callbacks Events

*/

const events = require('events');

const eventEmitter = new events.EventEmitter();

function listenerOne() {

console.log('First Listener Executed');

}

function listenerTwo() {

console.log('Second Listener Executed');

}

eventEmitter.on('listenerOne', listenerOne); // Register for listenerOne

eventEmitter.on('listenerOne', listenerTwo); // Register for listenerOne

// When the event "listenerOne" is emitted, both the above callbacks should be invoked.

eventEmitter.emit('listenerOne');

// Output

First Listener Executed

Second Listener Executed

Example 02:

/**

* Emit Events Once

*/

const events = require('events');

const eventEmitter = new events.EventEmitter();

function listenerOnce() {

console.log('listenerOnce fired once');

}

eventEmitter.once('listenerOne', listenerOnce); // Register listenerOnce

eventEmitter.emit('listenerOne');

// Output

listenerOnce fired once

Q. What are the EventEmitter methods available in Node.js?

Listener Registration

| Method | Description |

|---|---|

.on(event, listener) |

Add a listener — fires every time the event is emitted |

.addListener(event, listener) |

Alias for .on() |

.once(event, listener) |

Add a listener that fires only once, then auto-removes |

.prependListener(event, listener) |

Add listener to the beginning of the array (fires first) |

.prependOnceListener(event, listener) |

Same as .prependListener() but fires only once |

Listener Removal

| Method | Description |

|---|---|

.off(event, listener) |

Remove a specific listener |

.removeListener(event, listener) |

Alias for .off() |

.removeAllListeners([event]) |

Remove all listeners for an event (or all events if omitted) |

Emitting Events

| Method | Description |

|---|---|

.emit(event, ...args) |

Trigger all listeners for the event synchronously. Returns true if listeners exist |

Inspection

| Method | Description |

|---|---|

.listeners(event) |

Returns a copy of the listeners array for the event |

.rawListeners(event) |

Returns raw listeners including .once() wrappers |

.listenerCount(event) |

Returns the number of listeners registered for an event |

.eventNames() |

Returns an array of all event names with registered listeners |

Configuration

| Method | Description |

|---|---|

.setMaxListeners(n) |

Set the max listener limit (default: 10). Use 0 for unlimited |

.getMaxListeners() |

Returns the current max listener limit |

Code Examples

const EventEmitter = require('events');

const emitter = new EventEmitter();

// --- Registration ---

emitter.on('data', (msg) => console.log('on:', msg));

emitter.once('data', (msg) => console.log('once:', msg));

emitter.prependListener('data', (msg) => console.log('prepend:', msg));

emitter.emit('data', 'hello');

// prepend: hello ← fired first

// on: hello

// once: hello

emitter.emit('data', 'world');

// prepend: world

// on: world ← once() is already removed

// --- Inspection ---

console.log(emitter.listenerCount('data')); // 2

console.log(emitter.eventNames()); // ['data']

// --- Removal ---

const handler = (x) => console.log(x);

emitter.on('click', handler);

emitter.off('click', handler); // removes specific listener

emitter.removeAllListeners('data'); // removes all 'data' listeners

emitter.removeAllListeners(); // removes everything

// --- Max listeners ---

emitter.setMaxListeners(20);

console.log(emitter.getMaxListeners()); // 20

// --- rawListeners (includes once wrapper) ---

emitter.once('ping', () => {});

console.log(emitter.rawListeners('ping')); // [Function: bound onceWrapper]

console.log(emitter.listeners('ping')); // [Function (anonymous)] ← unwrapped

Always call

.off()or.removeAllListeners()when a listener is no longer needed — especially inside long-lived objects — to prevent memory leaks.

Q. How the Event Loop Works in Node.js?

The Event Loop is the mechanism that allows Node.js to perform non-blocking I/O on a single thread by delegating operations to the OS kernel or libuv thread pool, then executing callbacks when they complete.

Node.js is a single-threaded application, but it can support concurrency via the concept of event and callbacks. Every API of Node.js is asynchronous and being single-threaded, they use async function calls to maintain concurrency. Node uses observer pattern. Node thread keeps an event loop and whenever a task gets completed, it fires the corresponding event which signals the event-listener function to execute.

Features of Event Loop:

- Event loop is an endless loop, which waits for tasks, executes them and then sleeps until it receives more tasks.

- The event loop executes tasks from the event queue only when the call stack is empty i.e. there is no ongoing task.

- The event loop allows us to use callbacks and promises.

- The event loop executes the tasks starting from the oldest first.

The Six Main Phases

| Phase | Description |

|---|---|

| Timers | Executes callbacks from setTimeout() and setInterval() once their minimum threshold has passed. |

| Pending Callbacks | Handles certain system-level error callbacks, such as TCP errors (e.g., ECONNREFUSED). |

| Idle, Prepare | Used only internally by Node.js for housekeeping. |

| Poll | The heart of the loop. It retrieves new I/O events (network, disk) and executes their callbacks immediately. |

| Check | Executes setImmediate() callbacks, which are specifically designed to run right after the Poll phase. |

| Close Callbacks | Handles the closing of resources, like socket.on(‘close’), stream.on(‘close’) |

Between every phase, Node.js drains microtasks in this order:

process.nextTickqueue- Promise

.then/.catchqueue

Example:

console.log('1. sync');

setTimeout(() => console.log('2. setTimeout'), 0);

setImmediate(() => console.log('3. setImmediate'));

Promise.resolve().then(() => console.log('4. Promise'));

process.nextTick(() => console.log('5. nextTick'));

console.log('6. sync end');

// Output:

// 1. sync

// 6. sync end

// 5. nextTick ← microtask (highest priority)

// 4. Promise ← microtask

// 2. setTimeout ← timers phase

// 3. setImmediate ← check phase

Q. How are event listeners created in Node.JS?

An array containing all eventListeners is maintained by Node.js. Each time .on(), .once(), or .addListener() function is executed, a new event listener is added to that array. When the concerned event is emitted, each eventListener that is present in the array is called in a sequential or synchronous manner.

The event listeners are called in a synchronous manner to avoid logical errors, race conditions etc. The total number of listeners that can be registered for a particular event, is controlled by .setMaxListeners(n). The default number of listeners is 10.

emitter.setMaxListeners(12);

Example: .on() — persistent listener

const EventEmitter = require('events');

const emitter = new EventEmitter();

// Fires every time the event is emitted

emitter.on('message', (data) => {

console.log('Received:', data);

});

emitter.emit('message', 'hello'); // Received: hello

emitter.emit('message', 'world'); // Received: world

Example: .once() — single-fire listener

// Auto-removes itself after first call

emitter.once('connect', () => {

console.log('Connected!');

});

emitter.emit('connect'); // Connected!

emitter.emit('connect'); // (nothing — listener is gone)

As an event Listener once registered, exists throughout the life cycle of the program. It is important to detach an event Listener once its no longer needed to avoid memory leaks. Functions like .removeListener(), .removeAllListeners() enable the removal of listeners from the listeners Array.

Example: removeAllListeners()

// In long-lived objects, clean up to prevent memory leaks

class Connection extends EventEmitter {

destroy() {

this.removeAllListeners(); // clean up everything

}

}

Q. What is the difference between process.nextTick() and setImmediate()?

1. process.nextTick():

The process.nextTick() method adds the callback function to the start of the next event queue. It is to be noted that, at the start of the program process.nextTick() method is called for the first time before the event loop is processed.

2. setImmediate():

The setImmediate() method is used to execute a function right after the current event loop finishes. It is callback function is placed in the check phase of the next event queue.

Example: Execution Order

/**

* setImmediate() and process.nextTick()

*/

setImmediate(() => console.log('1. setImmediate'));

process.nextTick(() => console.log('2. nextTick'));

Promise.resolve().then(() => console.log('3. Promise'));

console.log('4. sync');

// Output:

// 4. sync

// 2. nextTick ← microtask, runs before event loop phases

// 3. Promise ← microtask, runs after nextTick queue

// 1. setImmediate ← check phase

Example: Inside an I/O Callback

const fs = require('fs');

fs.readFile(__filename, () => {

setTimeout(() => console.log('1. setTimeout'), 0);

setImmediate(() => console.log('2. setImmediate'));

process.nextTick(() => console.log('3. nextTick'));

});

// Output:

// 3. nextTick ← always first (microtask)

// 2. setImmediate ← always before setTimeout inside I/O

// 1. setTimeout

When to Use Each

- process.nextTick() — when you need a callback to fire before any I/O in the current operation completes (e.g., emitting errors after constructor returns)

- setImmediate() — when you want to execute after I/O events in the current loop iteration, without blocking I/O

Q. What are the phases of the Node.js Event Loop?

The Node.js event loop processes asynchronous callbacks in a specific order across six phases. Each phase has a FIFO queue of callbacks to execute.

┌───────────────────────────┐

┌─>│ timers │ ← setTimeout / setInterval callbacks

│ └─────────────┬─────────────┘

│ ┌─────────────┴─────────────┐

│ │ pending callbacks │ ← I/O errors deferred to next loop

│ └─────────────┬─────────────┘

│ ┌─────────────┴─────────────┐

│ │ idle, prepare │ ← internal use only

│ └─────────────┬─────────────┘

│ ┌─────────────┴─────────────┐

│ │ poll │ ← retrieve new I/O events; execute I/O callbacks

│ └─────────────┬─────────────┘

│ ┌─────────────┴─────────────┐

│ │ check │ ← setImmediate callbacks

│ └─────────────┬─────────────┘

│ ┌─────────────┴─────────────┐

└──┤ close callbacks │ ← e.g., socket.on('close', ...)

└───────────────────────────┘

| Phase | What runs here |

|---|---|

| timers | setTimeout and setInterval callbacks whose delay has expired |

| pending callbacks | I/O callbacks deferred from the previous iteration |

| idle / prepare | Internal Node.js use only |

| poll | Retrieves new I/O events; executes I/O-related callbacks (file, network) |

| check | setImmediate callbacks |

| close callbacks | socket.destroy(), stream.on('close') handlers |

Between every phase (and between every individual callback in some phases), Node.js drains the microtask queues:

process.nextTickqueue (highest priority)- Promise

.then/.catch/.finallyqueue

Example — phase execution order:

const fs = require('fs');

// Phase: timers

setTimeout(() => console.log('1. setTimeout'), 0);

// Phase: check

setImmediate(() => console.log('2. setImmediate'));

// Phase: poll (I/O callback)

fs.readFile(__filename, () => {

console.log('3. fs.readFile callback');

// Inside I/O callback: setImmediate always before setTimeout

setTimeout(() => console.log('4. setTimeout inside I/O'), 0);

setImmediate(() => console.log('5. setImmediate inside I/O'));

});

// Microtask: nextTick (runs before any phase)

process.nextTick(() => console.log('6. process.nextTick'));

// Microtask: Promise (runs after nextTick queue empties)

Promise.resolve().then(() => console.log('7. Promise.then'));

console.log('8. Synchronous code');

// Output order:

// 8. Synchronous code

// 6. process.nextTick

// 7. Promise.then

// 1. setTimeout (order of 1 & 2 may vary outside I/O)

// 2. setImmediate

// 3. fs.readFile callback

// 5. setImmediate inside I/O (always before setTimeout inside I/O)

// 4. setTimeout inside I/O

Key rules to remember: